Sneak Peek At Blender's DLSS Real-Time Denoiser — You Have To See This!

The Future is finally here!* (almost)

Deep Learning Super Sampling (DLSS) Ray Reconstruction might make its way into Blender (at least, it's in the works). And the potential is, without exaggeration, astonishing.

Unlike "traditional" denoisers, DLSS reconstructs the image in a different way, and its cost doesn't really depend on scene lighting or polygonal complexity. Because of this, games use it to "upscale" a fairly low-res native render to a much higher-res target, such as 4K or more. It's very effective and, ironically, despite being an upscaler, it's nowadays almost synonymous with the "best quality" rendering preset in games. That's simply because more and more devs get lazy and spend less effort on optimization, especially on anti-aliasing, "offloading" that task to a dedicated (and, unfortunately, deeply proprietary) neural-network upscaler like DLSS.
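To put the "render low, upscale high" idea into numbers, here's a small sketch that counts how many pixels actually get shaded per frame. It models only the resolution arithmetic, not DLSS itself. The per-axis scale factors are illustrative assumptions (0.5 per axis is the commonly cited ratio for DLSS's Performance mode, roughly 2/3 for Quality), and the function names are made up for this example.

```python
def shaded_pixels(width: int, height: int) -> int:
    """Number of pixels the renderer has to shade for one frame."""
    return width * height


def upscale_savings(target_w: int, target_h: int, axis_scale: float) -> tuple[int, int, float]:
    """Return the internal render resolution for a given per-axis scale factor,
    plus how many times fewer pixels it shades than the target resolution."""
    internal_w = round(target_w * axis_scale)
    internal_h = round(target_h * axis_scale)
    ratio = shaded_pixels(target_w, target_h) / shaded_pixels(internal_w, internal_h)
    return internal_w, internal_h, ratio


if __name__ == "__main__":
    # Hypothetical per-axis scale factors, chosen for illustration only.
    for label, scale in [("half per axis", 0.5), ("two thirds per axis", 2 / 3)]:
        w, h, r = upscale_savings(3840, 2160, scale)
        print(f"{label}: render {w}x{h}, shade {r:.2f}x fewer pixels than 4K")
```

Halving both axes means rendering 1920x1080 instead of 3840x2160, i.e. shading four times fewer pixels; at two thirds per axis (2560x1440) the saving is 2.25x. The upscaler then has to fill in the rest.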

I bet that, like me, you have always wondered whether this very approach could be used in a traditional 3DCG creation app like Blender.

Well, wonder no more! Someone took an unfinished alpha version of the Blender codebase with DLSS integration and compiled it into an actual test build.

Prepare to be amazed.

Blender 3.0 Removes OpenCL as Cycles GPU Rendering API

Sad news for the open-source and open-standards community coming from the Blender team:

OpenCL rendering support was removed. The combination of the limited Cycles kernel implementation, driver bugs, and stalled OpenCL standard has made maintenance too difficult. We are working with hardware vendors to bring back GPU rendering support on AMD and Intel GPUs, using other APIs.

At the same time, GPU rendering on NVIDIA hardware saw noticeable improvements, in large part thanks to better utilization of NVIDIA's own OptiX library:

- Rendering of hair as 3D curves (instead of ribbons) is significantly faster, by using the native OptiX curve support.

- OptiX kernel compilation time was significantly reduced.

- Baking now supports hardware ray-tracing with OptiX.

So NVIDIA wins... TWICE:

GPU kernels and scheduling have been rewritten for better performance, with rendering often between 2-7x faster in real-world scenes.

Why Am I Not Celebrating This?

All of this once again shows the real-world difficulty of developing, maintaining and promoting open-source alternatives to commercial software that manufacturers design to squeeze every last bit of performance out of their own hardware.

And the end result? We're falling deeper and deeper into the vendor lock-in trap. As vendors keep turning their proprietary hardware-interfacing software and APIs into state-of-the-art, ready-to-use solutions, the alternatives developed in a "democratic" environment keep tripping over their own shoelaces, failing to gain any traction in the very market they set out to offer an alternative in.

This makes me grateful that open, cross-vendor APIs that work, like OpenGL and Vulkan, do exist. But... why are we still in a situation where Vulkan, "the next generation graphics and compute API", still can't provide even the same level of functionality as the dreaded OpenCL, so that it could finally offer a real alternative to commercial compute APIs? Why didn't the Blender team even mention Vulkan as something they would look into as a replacement for OpenCL?

A million dollar question...

Blender Cycles + NVIDIA RTX = An Artist's Dream

Watch this:

Wow.

Now imagine yourself in the shoes of Autodesk management. You have hundreds of thousands of people who want to enter the 3DCG industry. You tell your shareholders: "Hey, let's take away perpetual software licenses, both professional and entry-level, and make subscriptions our customers' only option. Yeah, subscriptions. The worst possible thing for a freelancer. What a great idea! It will surely stand the test of time!"

Now tell me: after a myriad of amazing Blender updates, more and more companies becoming sponsors of the Blender Foundation, and now NVIDIA integrating their RTX + AI denoiser into the software this well...

Tell me, how many more potential Autodesk clients will turn to Blender and never look back?

Don't ask me why my mind immediately went to Autodesk of all things... Probably because I will never forgive them for killing off Softimage and then going the subscription-only route.

Ahem, excuse me. Anyway...

Well done, Blender and NVIDIA!

Reality Check. What Is NVIDIA RTX Technology? What Is DirectX DXR? Here's What They Can and Cannot Do

NVIDIA and its partners, as well as AAA-developers and game engine gurus like Epic Games, keep throwing their impressive demos at us at an accelerating rate.

These showcase the recently announced real-time ray tracing toolset of Microsoft DirectX 12, as well as the (claimed) performance benefits of NVIDIA's proprietary RTX technology available on its Volta GPU lineup, which in theory should give developers new tools for achieving never-before-seen realism in games and real-time visual applications.

There's a demo by the Epic team I found particularly impressive:

Looking at these beautiful images, one might expect NVIDIA RTX and DirectX DXR to do more than they are actually capable of. Some might even think the time has come when we can ray trace the whole scene in real time and say goodbye to good old rasterization.

There's an excellent article at PC Perspective you should definitely check out if you're interested in the current state of the technology and in the relationship between Microsoft DirectX Raytracing and NVIDIA RTX. Without an explanation, that relationship can be quite confusing: NVIDIA heavily focuses on RTX as a native, hardware-accelerated technology, whilst Microsoft stresses that DirectX DXR is an extension of the existing DX toolset and will be compatible with all future certified DX12-capable graphics cards (since the world of computer graphics doesn't revolve solely around NVIDIA and its products, you know).

So here I am to quickly summarize what RTX and DXR are really capable of at the time of writing, and what they are good (and not so good) for.

NVIDIA Volta + Microsoft DX12 = Real-time ray-tracing. Finally?

Whenever I hear the term "real-time ray-tracing", I immediately think of some of the earlier RTRT experiments by Ray Tracey and OTOY Brigade. You know, those impressive, yet noisy and not-quite-real-time demos with mirror-only reflections and lots and lots of noisy frames slowly converging.

Like this one:

I didn't dream of seeing anything actually ray-traced in real time at 30fps without any noise within the next 5-10 years. Little did I know that NVIDIA and Microsoft had the same idea and had put their best minds to the task.

Results? Well...

Delivered 100%.

This demo, developed by EA (believe it or not), runs on NVIDIA's newest Volta GPUs, which means that Volta is also on the way! Yay! NVIDIA RTX tech sure looks promising.

Can you imagine what will happen to offline CUDA ray-tracers following this announcement? Hopefully their devs will be able to make this amazing tech a part of the rendering pipeline ASAP. Otherwise, C'est la vie: you've been REKT by a real-time ray-tracing solution.

Just kidding. We gotta test this thing out first, and only then will we be able to tell whether we've been led to believe yet another fairy tale, or whether you need something like eight Voltas to run this demo, which would be a letdown.

NVIDIA PhysX FleX now compatible with the majority of modern graphics cards

In case you missed the announcement, here's some truly great news:

"To better serve the game development community we now offer Direct3D 11/12 implementations of the Flex solver in addition to our existing CUDA solver. This allows Flex to run across vendors on all D3D11 class GPUs. Direct3D gives great performance across a wide range of devices and supports the full Flex feature set."

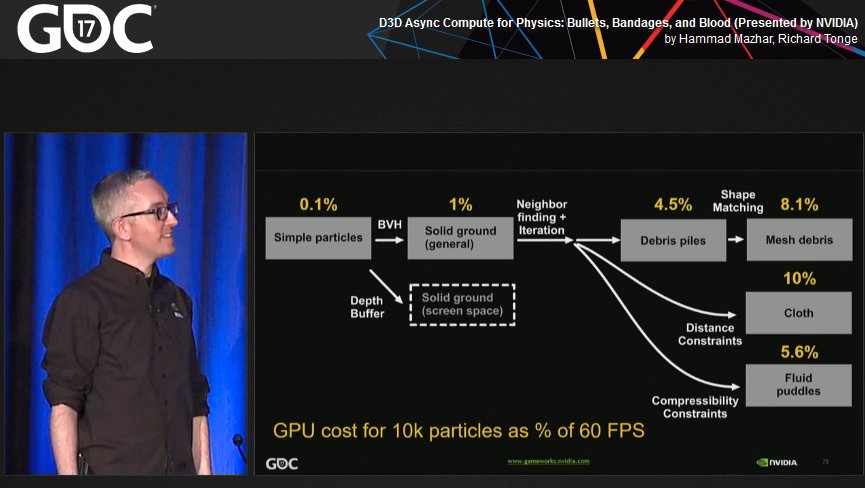

Yes! FleX is no longer a CUDA-only physics library! NVIDIA devs have also used async compute to make it as efficient as possible with D3D.

Check out the GDC talk.

Hopefully we'll start seeing more FleX in upcoming AAA titles (or maybe even indie ones? Who knows!)

NVIDIA PhysX FleX and Other Fluid Solvers for High-Quality Fluid Simulation

Beware the strawberry milkshake monster!


Simulations are hard.

When it comes to running simulations on meshes with a finite number of vertices, it's relatively easy to achieve the desired results. But as soon as you try taming hundreds of thousands or even millions of particles, you're in trouble, especially with fluid simulations. You need a special kind of solver, a powerful rig (or a network of rigs) and a lot of patience. It took me by surprise how difficult seemingly trivial simulations can be.

In the animated film I'm working on, I will have bodies of water large and small, plus certain gaseous fluids in the background for increased production value.

If you're a freelancer or a hobbyist on a budget who needs to simulate some fluids, off-the-shelf tools can be a good choice... But there are so many of them that figuring out their differences, pros and cons is a quest in itself. In this post I'll explore some of the ways an amateur like me can do various fluid-like simulations, and which technologies can help get the job done.

The big guns

I'll briefly cover two of perhaps the most well-known and renowned fluid sims on the market: Naiad and RealFlow.

There was a time when you could only buy a single Naiad license for $5,500 or rent it quarterly for about $1,400. Luckily, those times are over: in 2012 Naiad was sold to Autodesk and turned into Maya Bifrost, so now you can get your hands on Naiad tech within Maya for just $185 a month. You can find out more about Bifrost in this blog post at Digital-Tutors. It's a powerful FLIP solver (more on this method below), well integrated into Maya, with GPU caching, the ability to play back tens of thousands or even millions of particles in real time directly within the DCC, and a variety of tools for artistic direction of your simulations.
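For a rough sense of scale, here's the arithmetic behind those prices: how many months of the $185 subscription add up to the price of one perpetual Naiad seat, and what the old quarterly rental cost per month. The figures are the ones quoted in this post and may well have changed since; the helper name is made up for illustration, and the comparison ignores features, upgrades and taxes.

```python
import math

# Prices quoted in this post at the time of writing (USD).
NAIAD_PERPETUAL = 5500.0
NAIAD_PER_QUARTER = 1400.0
MAYA_PER_MONTH = 185.0


def months_to_match(upfront: float, monthly: float) -> int:
    """Smallest whole number of months whose cumulative cost reaches `upfront`."""
    return math.ceil(upfront / monthly)


if __name__ == "__main__":
    print("Maya subscription months to reach Naiad's perpetual price:",
          months_to_match(NAIAD_PERPETUAL, MAYA_PER_MONTH))
    print("Old Naiad rental cost per month:", round(NAIAD_PER_QUARTER / 3, 2))
```

So about 30 months of Maya (Bifrost included) cost as much as a single Naiad seat used to, while the old quarterly rental worked out to roughly $467 a month.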

Then there's RealFlow, which comes with several solvers to choose from (SPH, PBF, HYBRIDO). Its Dyverso particle solver (the one that uses PBF) lets you simulate on either the CPU or the GPU, the latter using OpenCL for computations. You can read more about RealFlow's solvers here. Overall, RealFlow isn't terribly slow and scales well when you give it lots of cores to work with, but as soon as you hit your hardware limitations and realize that the cheapest single-seat license with the C4D integration costs over 750 bucks, you start looking for other solutions.

Other freeware and commercial tools for fluid simulation

I won't spend too much time on different types of solvers available on the market, only mention some of them for the sake of argument. There's an excellent (albeit slightly dated) article on the subject at fxguide explaining them in detail if you're interested in finding out more.