I'd like to present the results of a study I conducted recently:

Story

While working on my animated short film I am constantly updating a list of tasks to do in order to achieve a decent final result. One particular topic cost me some sleep recently, when I remembered that in order to correct skinning errors on animated characters you either have to sculpt and then script a bunch of blend shapes or need a muscle system available in your DCC software.

I then turned to Maya, which has had a muscle system for ages.

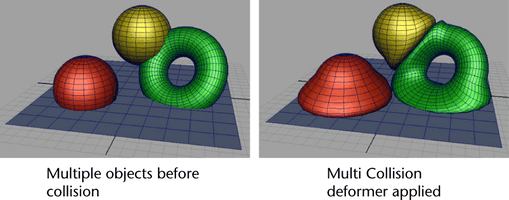

Maya Muscle system

Maya provides several deformers to manage soft- and hard-tissue interaction between muscles, such as smart, self and multi-object collisions. Even though they aren't terribly performant, they get the job done.

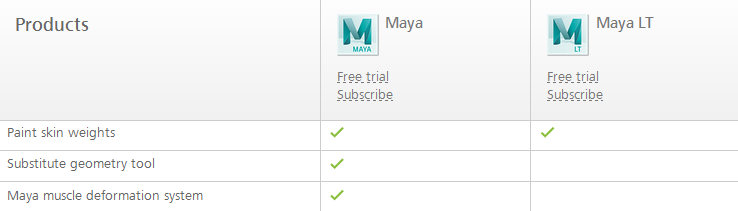

Unfortunately, as soon as I used any of Maya's Muscle system tools I'd be tied to a $185-a-month subscription plan for the whole duration of the project, which would be outrageous. There is a lite version of Maya available for indie gamedevs, Maya LT, which only costs $30 a month, but it doesn't come with the Muscle system.

Hence I had to improvise and develop a simple and straightforward solution for this problem in a DCC of my choice.

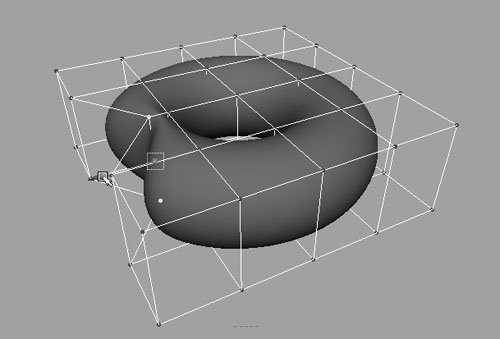

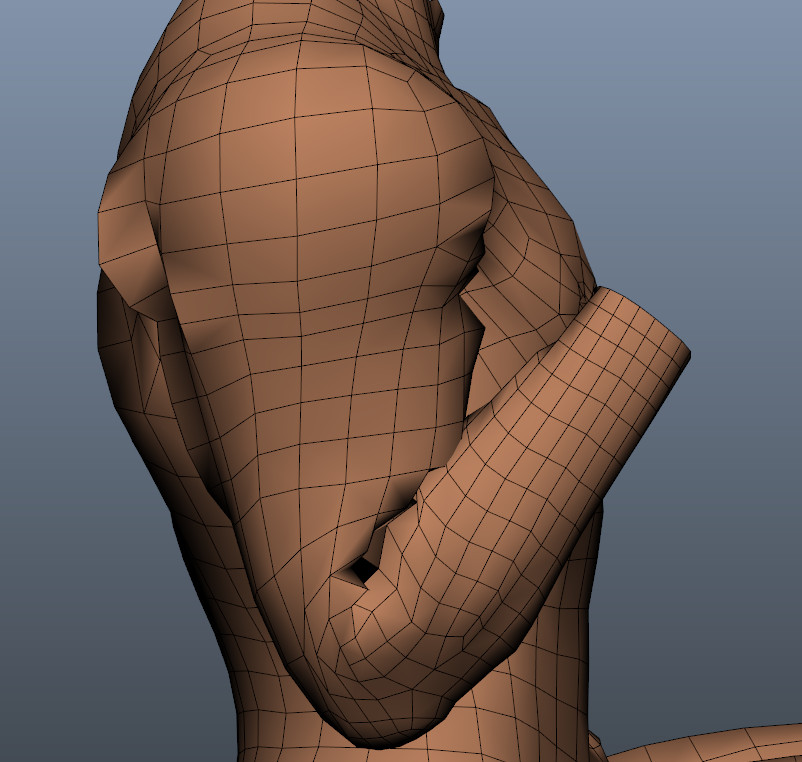

Basic muscle using a cage or an envelope

Alas, Softimage doesn't provide a ready-to-use muscle system off the shelf. The good news is that any object can be cage-deformed or skinned to any other object in Softimage, so creating "muscles" would be as simple as placing spheres and capsules around the rig, parenting or constraining them to bones and adding a cage deformer on top of a skin envelope. Then all you do is animate the shapes of those muscles based on bone position or rotation.

Cumbersome, but doable.

You can then take it further and add a dynamic jiggle to your muscle objects using ICE Verlet Integration.
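
For readers who haven't met the technique, here is a minimal Python sketch of position Verlet integration making a point trail and overshoot an animated target, which is the essence of a jiggle. It is not an ICE tree and uses no Softimage API; the function name and the `stiffness`, `damping` and `dt` parameters are assumptions made up for illustration.

```python
def verlet_jiggle(targets, stiffness=40.0, damping=0.1, dt=1.0 / 24.0):
    """Return jiggled 1D positions following `targets`, one per frame.

    Position Verlet: x_next = x + (x - x_prev) * (1 - damping) + a * dt^2,
    where the acceleration `a` is a spring pulling the point toward the target.
    All names and default values here are hypothetical.
    """
    x_prev = x = targets[0]  # start at rest on the first target
    result = []
    for target in targets:
        accel = stiffness * (target - x)
        x_next = x + (x - x_prev) * (1.0 - damping) + accel * dt * dt
        x_prev, x = x, x_next
        result.append(x)
    return result


# The target jumps from 0 to 1 at frame 5 and then stays put.
targets = [0.0] * 5 + [1.0] * 120
positions = verlet_jiggle(targets)
```

With these values the point overshoots the target, wobbles around it and settles after a few seconds' worth of frames. Lower `damping` gives a longer wobble, higher `stiffness` a faster one.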

What it doesn't help with, however, is simulating collisions between the muscles and the skin. Muscles deform upon collision in real life. Since they also have skin attached to them you get skin collision management "for free". So we need to find a way to collide those muscles on our character rigs.

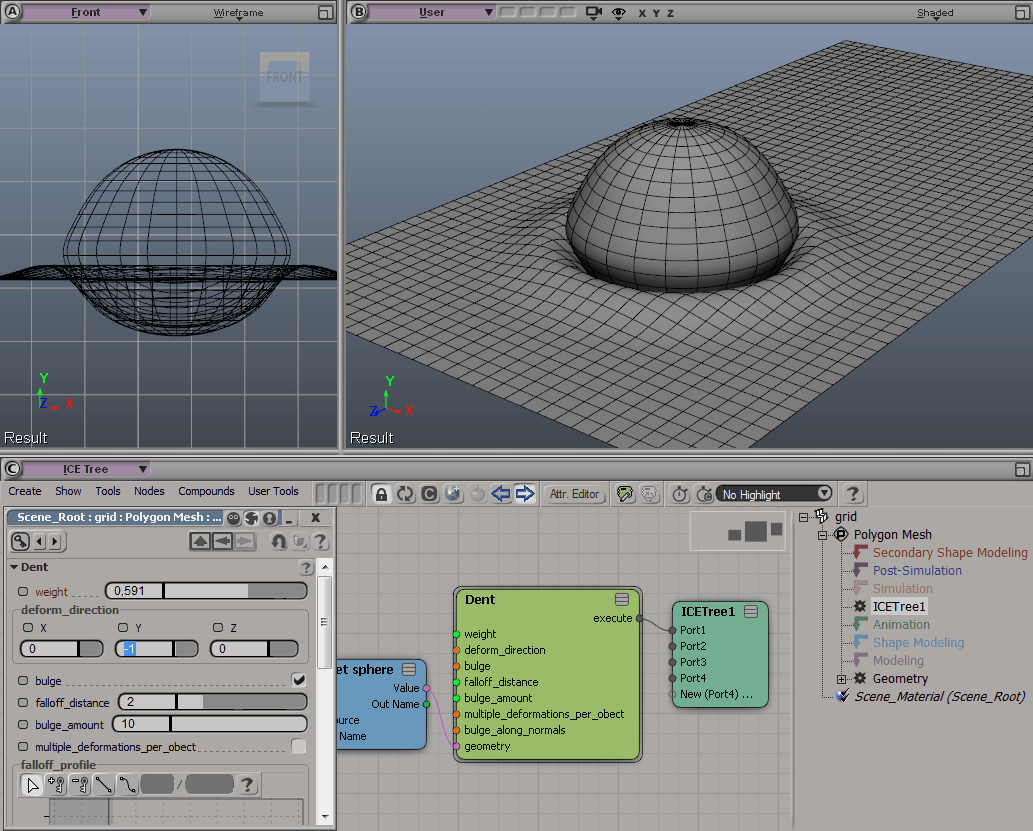

Softimage ICE

Those familiar with Softimage ICE would say: "Just use ICE to do this". Unfortunately I'm no Technical Director and my knowledge of ICE is limited. It would take me quite a while to build an ICE tree that manages collisions between objects the way Maya Muscle does. Hell, even among the Softimage ICE sample scenes the only solution that comes close is the bi-directional mesh-to-mesh deformation (or dent), but it doesn't use normals to calculate point positions and requires you to explicitly specify the axes along which the object should deform.

I do believe that with the help of Verlet Integration it could be possible, but recreating something as complex as Maya's muscle collision system with ICE or Fabric Engine is a project in itself and just not an option in my case.

After a bit of digging I did discover a free ICE-powered muscle system for Softimage called VorleX Muscle. I tried it out and, powerful as it was, the only type of collision it supported was unidirectional muscle deformation: collider objects didn't deform, only the muscle did. So no muscle-to-muscle deformations here either...

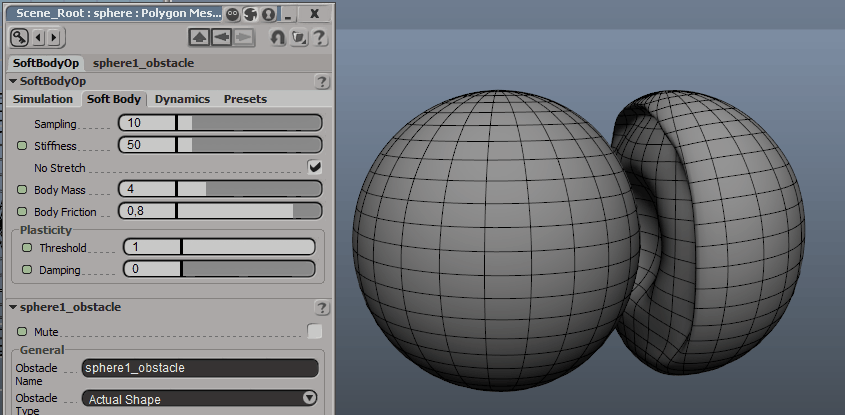

Soft body simulation

Another way of achieving artist-directed collision is adding a soft-body dynamics simulation on top of an existing object animation which, believe it or not, is perfectly possible in Softimage if you're willing to bite the bullet and use the legacy simulation toolset. There's an article on the Softimage wiki explaining how it can be done.

This method has some serious drawbacks though:

- Legacy simulations are single-threaded.

- Animation-driven bi-directional interaction between two soft bodies is notoriously difficult to set up and even if you manage to do it, you will get strange behaviors when soft bodies actually collide.

- I find legacy soft body simulation not very flexible (get it?) when it comes to body properties. For example, there is no way to add an internal-pressure-like force, which is a problem when one soft body squashes another like a wobbly rubber ball. You just can't make a muscle with that.

- Legacy simulations can be and often are quite unstable. And that's frustrating as hell.

To sum up: I tried this method and failed miserably. I'm not going to touch the XSI legacy simulation system ever again.

Blend shapes...

Well... When everything else fails, as a last resort you can always sculpt blend shapes and interpolate between them, but this is tedious and requires a bit of scripting. Besides, you would have to do it for each character separately. If you have a team of artists it's fine. But if you're alone and have a deadline looming in the distance, or simply can't afford to dedicate too much time to the project, it turns ugly.
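
To give an idea of what that "bit of scripting" typically boils down to, here is a small Python sketch: a joint angle is mapped to a 0..1 weight, and the weight linearly interpolates each point between the base mesh and a sculpted corrective shape. The function names, the angle range and the two-point "mesh" are all made up for illustration; nothing here is Softimage or Maya API.

```python
def pose_weight(angle_deg, start_deg=30.0, full_deg=120.0):
    """Map a joint angle to a 0..1 blend weight (linear ramp, clamped)."""
    t = (angle_deg - start_deg) / (full_deg - start_deg)
    return max(0.0, min(1.0, t))


def blend(base, corrective, weight):
    """Interpolate every point: base + weight * (corrective - base)."""
    return [
        tuple(b + weight * (c - b) for b, c in zip(base_pt, corr_pt))
        for base_pt, corr_pt in zip(base, corrective)
    ]


# Hypothetical data: two points of a mesh and their sculpted correction.
base = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
corrective = [(0.0, 0.2, 0.0), (1.0, 0.4, 0.0)]

# An elbow bent to 75 degrees sits halfway along the 30..120 degree ramp.
half_bent = blend(base, corrective, pose_weight(75.0))
```

The code itself is trivial. The tedious part is sculpting `corrective` for every problem pose of every character.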

Another way

After all of those failed attempts I thought to myself: if creating object-to-object deformations is so complicated and sculpting blend shapes is so time-consuming, why not look for another way? Something that would do this for you automatically (more or less) and still produce a decent result.

I got to work and after a series of experiments finally managed to develop a method that produces somewhat believable skin collisions for animation and is very easy to set up and use: a combination of Cloth simulation and a Delta Mush deformer.

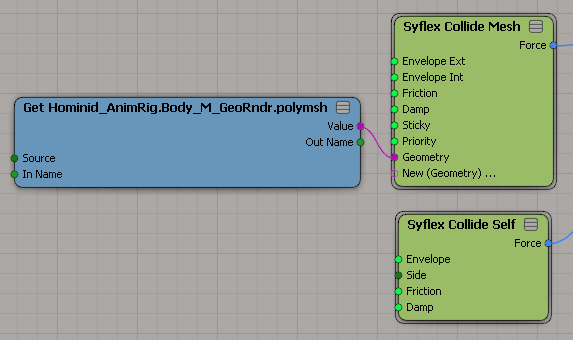

Cloth skin simulation

The idea of using cloth simulation for skin is anything but new. The Syflex devs posted a tutorial on how to do this over a decade ago.

What they didn't cover in the tutorial, though, was self-collisions, simply because when used this way they would in many cases cause serious simulation flickering at collider intersections.

You could of course fix this with a basic Smooth deformer, but the amount of smoothing required to ease those surface flickers out would destroy most if not all surface detail on your mesh.

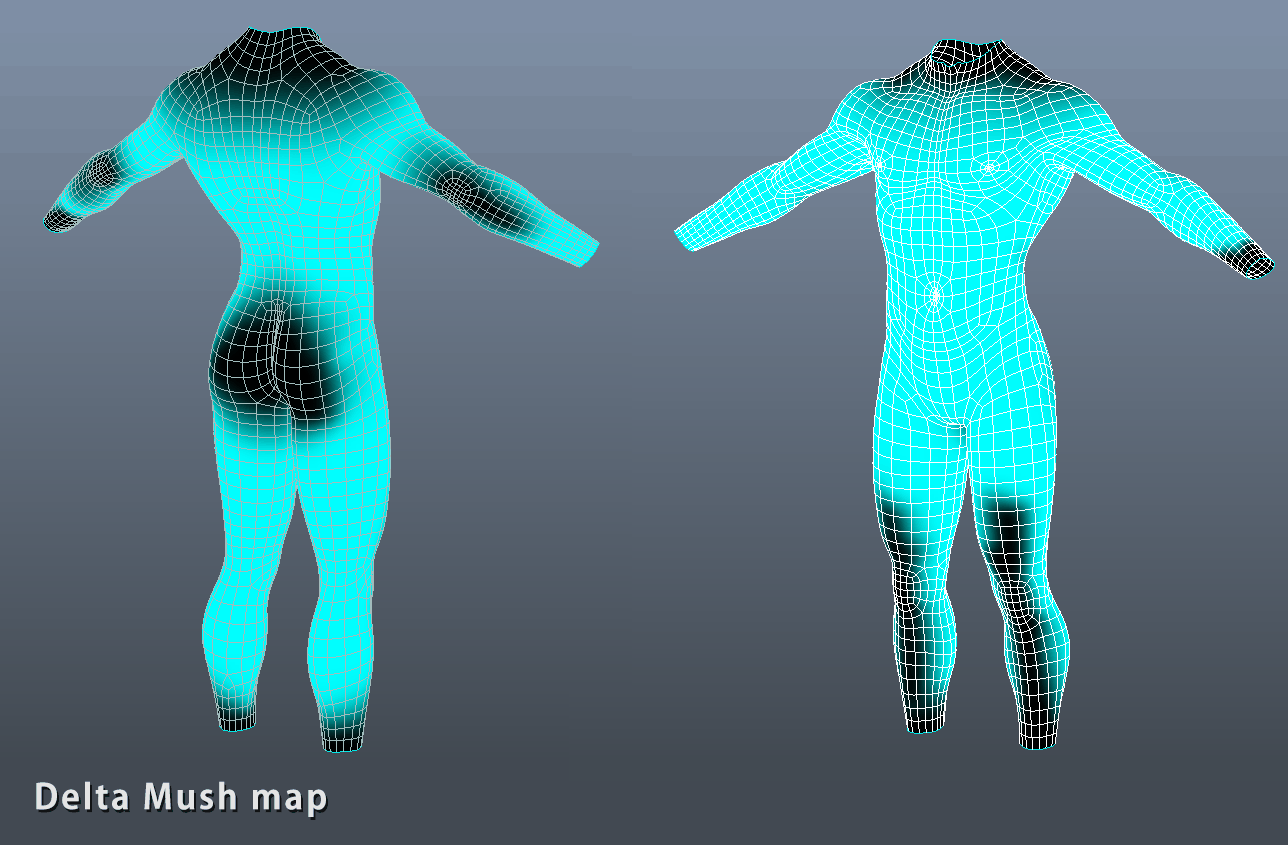

Mush it up

Luckily for us, the guys from Rhythm & Hues Studios presented their proprietary deformer at Siggraph 2014. They called it "Delta Mush".

This is how it works in a nutshell: local mesh detail (per point offsets from a smoothed to the original undeformed mesh) is precomputed and stored. Then the "to-be-fixed-deformer" is applied, directly after which the mesh is smoothed again with the same smoothing algorithm. This removes newly introduced detail which could potentially include unwanted artifacts from the deformer. Finally, the stored offsets are applied, restoring the original detail.
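
The steps above can be sketched in a few lines of Python. This is a toy version on a 2D polyline, not the Rhythm & Hues implementation nor either of the Softimage plugins. The smoothing kernel, the function names and the choice to store each offset in a per-vertex tangent/normal frame (so that detail rotates with the surface) are assumptions of this sketch.

```python
import math


def smooth(points, iterations=10):
    """Laplacian smoothing of an open 2D polyline; end points stay fixed."""
    pts = list(points)
    for _ in range(iterations):
        new = list(pts)
        for i in range(1, len(pts) - 1):
            new[i] = tuple(
                (pts[i - 1][k] + 2.0 * pts[i][k] + pts[i + 1][k]) / 4.0
                for k in range(2)
            )
        pts = new
    return pts


def frames(points):
    """Per-vertex (tangent, normal) unit vectors of a 2D polyline."""
    last = len(points) - 1
    result = []
    for i in range(len(points)):
        a, b = points[max(i - 1, 0)], points[min(i + 1, last)]
        tx, ty = b[0] - a[0], b[1] - a[1]
        length = math.hypot(tx, ty) or 1.0
        tx, ty = tx / length, ty / length
        result.append(((tx, ty), (-ty, tx)))
    return result


def precompute_deltas(rest):
    """Store rest-pose detail as offsets in the smoothed mesh's local frames."""
    smoothed = smooth(rest)
    deltas = []
    for p, s, (t, n) in zip(rest, smoothed, frames(smoothed)):
        dx, dy = p[0] - s[0], p[1] - s[1]
        deltas.append((dx * t[0] + dy * t[1], dx * n[0] + dy * n[1]))
    return deltas


def delta_mush(deformed, deltas):
    """Smooth the deformed mesh, then add the stored detail back."""
    smoothed = smooth(deformed)
    return [
        (s[0] + d[0] * t[0] + d[1] * n[0], s[1] + d[0] * t[1] + d[1] * n[1])
        for s, d, (t, n) in zip(smoothed, deltas, frames(smoothed))
    ]


# Hypothetical data: a zigzag "detailed" line with one spiky artifact.
rest = [(float(i), 0.1 * (i % 2)) for i in range(21)]
deltas = precompute_deltas(rest)
noisy = list(rest)
noisy[10] = (rest[10][0], rest[10][1] + 0.3)  # stand-in for a sim flicker
cleaned = delta_mush(noisy, deltas)
```

In this toy case the spike on vertex 10 is largely smoothed away while the zigzag detail of the rest shape survives, which a plain smooth alone would not manage. Feeding the undeformed `rest` back in returns it unchanged.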

You can get the paper here.

And here's the presentation:

As you can see, this is exactly what we need to correct simulation flickering (they actually show it in the video above. Shocking!)

Even luckier is the fact that there's not one, but two Delta Mush implementations for Softimage! One by Miquel Campos - a purely ICE-powered set of compounds - and Guillaume Baratte's C++ implementation that is much faster and allows attenuation of the effect by a weightmap. Thanks guys!

Another piece of good news is that the Syflex cloth simulation engine integrated into Softimage is programmed in such a way that when cloth gets caught between two objects it can still try to avoid self-intersections, provided you achieve the right balance between the friction value of the colliding objects (the base envelope, for example) and the one on the self-collision node of the cloth skin. Those are just force nodes after all.

Armed with these powerful tools I set out to experiment and after a series of attempts managed to more or less achieve what I longed for: automated full-body-ready self-collision handling for soft-tissue envelopes.

Does it work?

Granted, the result is not perfect (few automated things in the world of 3D are), but it's fast to set up and chances are that viewers won't even notice that collisions aren't physically correct in the animation. Hell, you can almost get away with having a simple quaternion envelope without corrective shapes as a base for the simulation as long as you don't approach extreme bone angles. This way you won't have to mess with muscles or corrective blend shapes for envelope collisions, which is good news especially when working on background characters.

This method has not been production-tested yet, but when the time comes I will definitely integrate it into my character pipeline and share the results.

To summarize

The method is both good and bad as are most things in life.

The good:

- Quick and easy to set up.

- Will work on any soft-tissue type deforming object and calculate collisions across the whole mesh.

- Can be used as a base to quickly create blend shapes. You could for example use this method to create one blend shape with extreme poses for all joints and then divide it into clusters which then would be driven by appropriate joints or drive different areas of the blend shape using a weightmap.

- Not too slow to solve even for the whole body (the example mesh I used in the video solved at a rate of about 0.3-0.5 frames per second with all collisions and the Delta Mush deformer live on the mesh).

- The denser the mesh, the better the deformation quality (well, duh!)

The bad:

- Solve speed heavily depends on the density of the mesh (as it does for any cloth and soft body simulation). Also, the smoother you want the simulation to be, the higher you will need to set the damping values, which might considerably increase the number of required computations.

- This method is not (in theory) suitable for extreme close-ups because of how the simulated cloth unwraps and relaxes after extreme poses. You can actually notice this in the video - look for slight skin jittering under the armpit as the arm joint rotates back to the bind pose. In most cases you can only see this if you look at the actual mesh in the viewport, but in cases where characters might have tattoos you might see some warping and jittering of skin textures.

- Solving very small meshes with dense polygons can be a problem. For example this method will not work for the fingers and the face because of the local mesh density being much higher than that of the rest of the body. Hence you will need to solve the body separately from the head, hands and feet and then recombine the two (or three) meshes into one for seamless smoothing of the whole body for rendering.

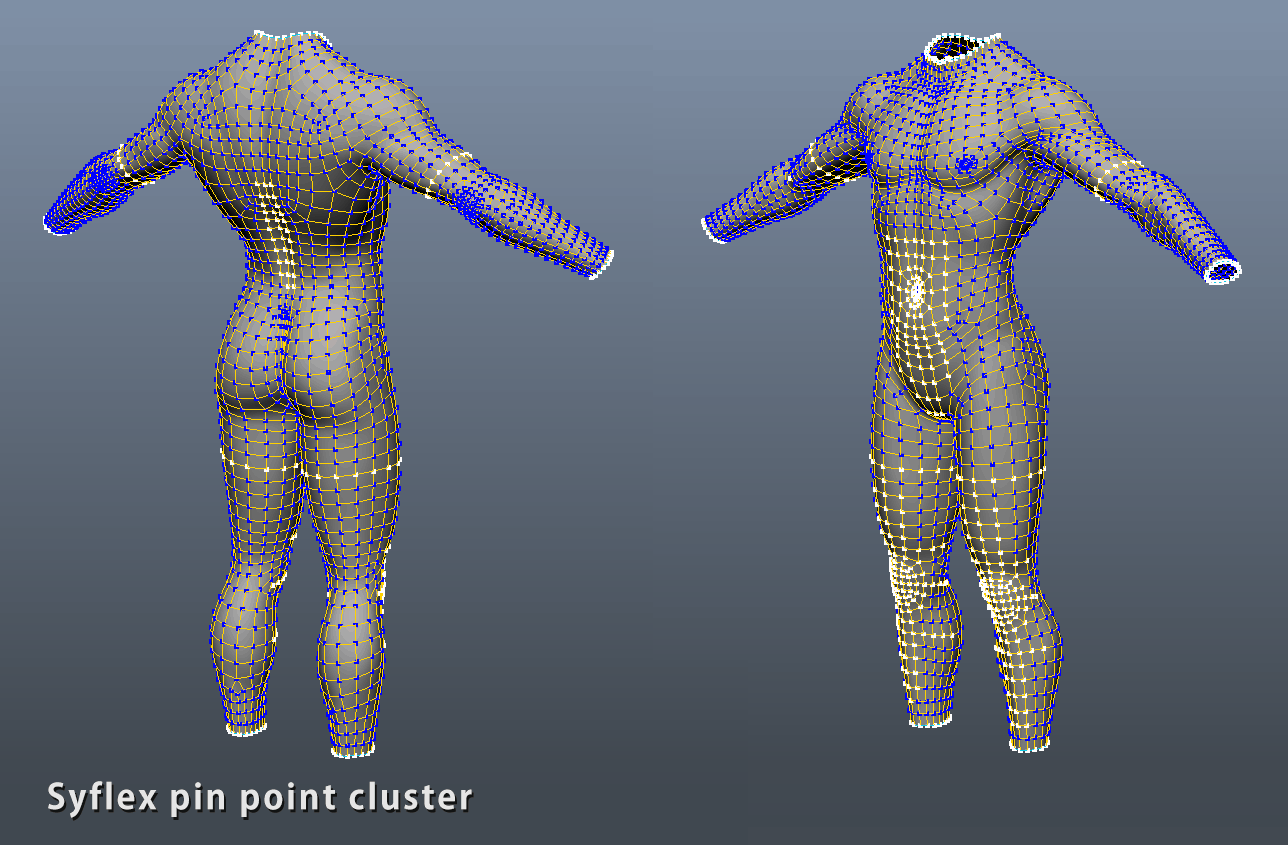

- To compensate for the mesh volume loss coming from the Delta Mush deformer you will need to paint a weightmap or two. You might also need to pin the cloth skin to your envelope here and there to make sure simmed skin doesn't get too far from the original skinned mesh during simulation. Not a big con, but it's there.

What's next

For the moment I'm happy with the results and will move on to other tasks and studies which should ultimately lead to the creation of a finished animated piece.

What do you think of this method? Any advice or criticism would be appreciated.