Vicon's VR multiplayer escape room at GDC 2018 featuring Shōgun 1.2

This year at GDC Vicon will be demonstrating its new Shōgun 1.2 MoCap platform. At its core it's an optical MoCap solution (which is quite different from inertial ones like Perception Neuron and is commonly used in professional production).

Vicon's Shōgun and Shōgun Live are well-known MoCap solutions used by Hollywood filmmakers and AAA game devs worldwide.

This time the company comes to GDC with a treat: they will be coupling Shōgun with VR headsets to immerse GDC visitors in interactive virtual worlds in what they are calling a "VR multiplayer escape room".

What's even cooler is that, as far as I can tell, all MoCap data will be streamed directly into Unreal Engine 4. I believe this will make the engine even more popular among game devs, especially those interested in VR applications (sorry, Unity).

Anyway, if this is something you might be interested in, you can read more about the event at Vicon's official website.

Unity and Unreal Engine: Real-Time Rendering VS Traditional 3DCG Rendering Approach

(Revised and updated as of June 2020)

Preamble

Before reading any further, please find the time to watch these. I promise, you won't regret it:

Now let's analyze what we just saw and make some important decisions. Let's begin with how all of this could be achieved with a "traditional" 3D CG approach and why it might not be the best path to follow in the year 2020 and up.

Linear pipeline and the One Man Crew problem

I touched upon this topic in one of my previous posts.

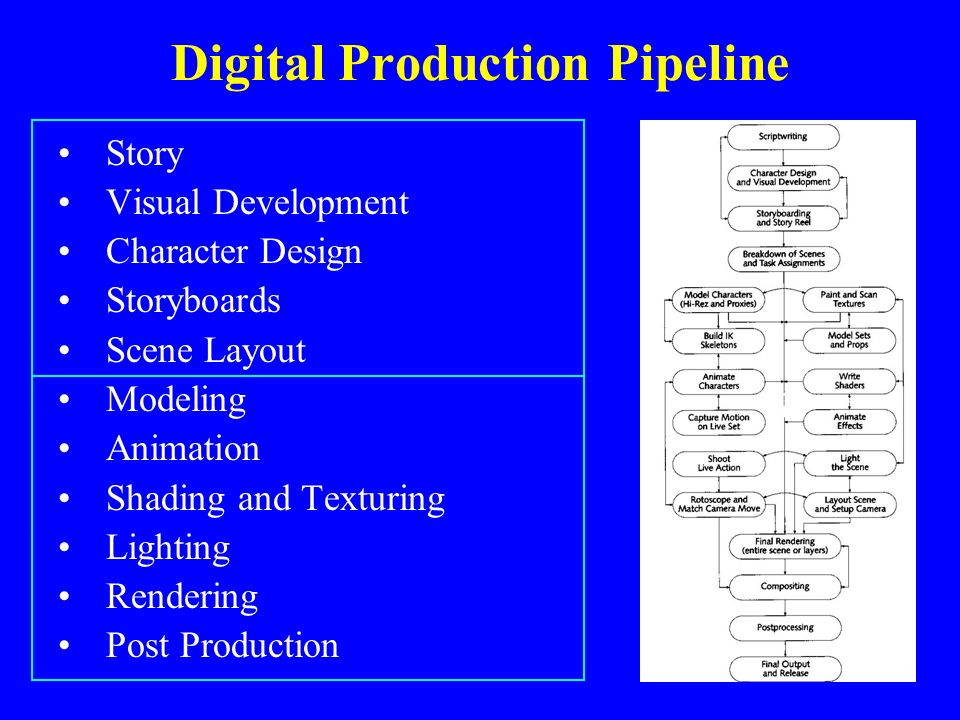

The "traditional" 3D CG-animated movie production pipeline is quite complicated. Not taking pre-production and animation/modeling/shading stages into consideration, it's a well-known fact that an A-grade animated film treats every camera angle as a "shot" and these shots differ a lot in requirements. Most of the time character and environment maps and even rigs would need to be tailored specifically for each one of those.

Shot-to-shot character shading differences in the same scene in The Adventures of Tintin (2011)

For example, if a shot features a close-up of a character's face, there is no need to subdivide the character's body each frame and feed it to the renderer. It also means, however, that the facial rig needs more controls, and the face probably requires an additional triangle or two, a couple of extra animation controls/blendshapes, and displacement/normal maps for wrinkles and such.

But the worst thing is that the traditional pipeline is inherently linear.

Thus you will only see pretty, production-quality images very late in the production process, especially if you rely on path-tracing render engines and lack the computing power to render out hundreds of frames at a time. I mean, we are talking about an animated feature that runs at 24 frames per second. For a short 8-plus-minute film this translates into roughly 12 thousand still frames. And those aren't your straight-out-of-the-renderer beauty pictures. Each final frame is a composite of several separate render passes as well as special effects and other elements sprinkled on top.
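To put rough numbers on that, here is a small back-of-the-envelope sketch. The frame count follows directly from the 24 fps figure above; the number of render passes and the minutes per pass are purely hypothetical values picked for illustration, not measurements from any real production.

```python
def frame_count(minutes: float, fps: int = 24) -> int:
    """Number of still frames in a film of the given running time."""
    return round(minutes * 60 * fps)


def render_hours(frames: int, passes: int, minutes_per_pass: float) -> float:
    """Total render time in hours if every frame needs `passes` separate
    render passes and each pass takes `minutes_per_pass` minutes."""
    return frames * passes * minutes_per_pass / 60


print(frame_count(8))     # 11520 frames for exactly 8 minutes
print(frame_count(8.5))   # 12240 frames for 8.5 minutes

# Hypothetical workload: 5 passes per frame, 10 minutes per pass.
print(render_hours(frame_count(8.5), passes=5, minutes_per_pass=10))  # 10200.0
```

With those made-up per-pass figures, a single machine would need over a year of non-stop rendering, and any late change to the scene means paying part of that bill again.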

Now imagine that at a later stage of the production you or the director decides to make adjustments. Well, shit. All of those comps you rendered out and polished in AE or Nuke? Worthless. Update your scenes, re-bake your simulations and render, render, render those passes all over again. Then comp. Then post.

Sounds fun, no?

You can imagine how much time it would take one illiterate amateur to plan and carry out all of the shots in such a manner. It would be just silly to attempt such a feat.

Therefore, the bar of what I consider acceptable given the resources available at my disposal keeps getting...

Lower.

There! I finally said it! It's called a reality check, okay? It's a good thing. Admitting you have a problem is the first step towards a solution, right?

Right!?..

Oops, wrong picture

All is not lost, and it's certainly not the time to give up.

Am I still going to make use of Blend Shapes to improve facial animation? Absolutely, since animation is the most important aspect of any animated film.

But am I going to do realistic fluid simulation for large bodies of water (ocean and ocean shore in my case)? No. Not any more. I'll settle for procedural Tessendorf waves, like in an R&D test I did some time ago.
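Setting my own R&D aside, the general idea behind such procedural waves fits in a few lines. What follows is only a simplified sketch: it sums a handful of sinusoids using the deep-water dispersion relation (ω² = g·k), which is the building block of Tessendorf-style oceans, but it is not the FFT-based spectrum synthesis those usually rely on. The function name and the wave parameters are made up for illustration.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def wave_height(x: float, z: float, t: float, waves) -> float:
    """Height of the water surface at point (x, z) and time t.

    `waves` is a list of (amplitude, wavelength, direction_deg, phase)
    tuples; each entry contributes one travelling sine wave.
    """
    height = 0.0
    for amplitude, wavelength, direction_deg, phase in waves:
        k = 2.0 * math.pi / wavelength          # wavenumber
        omega = math.sqrt(G * k)                # deep-water dispersion
        dir_x = math.cos(math.radians(direction_deg))
        dir_z = math.sin(math.radians(direction_deg))
        height += amplitude * math.cos(
            k * (dir_x * x + dir_z * z) - omega * t + phase
        )
    return height


# Hypothetical wave set: one long swell plus two smaller ripples.
ocean = [
    (1.0, 40.0, 0.0, 0.0),
    (0.3, 12.0, 25.0, 1.3),
    (0.1, 4.0, -40.0, 0.4),
]
print(wave_height(3.0, 7.0, 0.5, ocean))
```

Because the height is a closed-form function of position and time, any frame can be evaluated directly, with no simulation to bake or cache, which is exactly why it is so much cheaper than a fluid solver.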

Will I go over the top with cloth simulation for characters in all scenes? Nope. It's surprising how often you can get away with skinned or bone-rigged clothes instead of actually simulating them, or even with real-time solvers on mid-poly meshes without caching the results... But now I'm getting a bit ahead of myself...

Luckily, there is a way to introduce the "fun" factor back into the process!

And contemporary off-the-shelf game engines may provide a solution.